Why algorithms may be making political polarization worse than we think

Recommendation systems do not simply organize content; they help organize perception. Marc Torrens and Carlos Carrasco-Farré analyze how algorithms influence opinions and heighten our emotional responses.

Imagine opening your preferred online platform to watch political news. You are not looking for extremism. You are not trying to deepen a conflict. You click on one video, then another, then another. And gradually, the political world you're presented with starts to shift. Your own political side looks sharper, more certain, more emotionally charged. The other side looks more extreme, more provocative, more threatening. The platform has not told you what to believe. But it may have quietly changed the emotional map of politics around you.

In other words, recommendation systems do not simply organize content. They help organize perception. Moreover, it is increasingly difficult to consume online content that is not mediated by recommender systems or personalization algorithms. We consume what algorithms filter and prioritize for us.

Recommendation algorithms influence which voices are perceived as representative, which positions seem normal, and which opponents appear most visible. In the political sphere, that can have consequences far beyond screen time. It can shape how citizens understand disagreement, how conflict escalates, and how democratic life is experienced in practice. In that sense, the algorithm is not only narrowing what we see. It may also be heightening its emotional temperature.

What appears on our screens is not a neutral sample of public life. It is the output of technical, commercial, and organizational choices about what deserves to be amplified. And those choices can shape how citizens imagine opponents, how conflict feels, and how democratic debate unfolds. This matters because millions of people now encounter politics through systems designed first and foremost to hold attention. These algorithms are not built with political intent; they are built to maximize the frequency and duration of user interaction. They are economically driven systems.

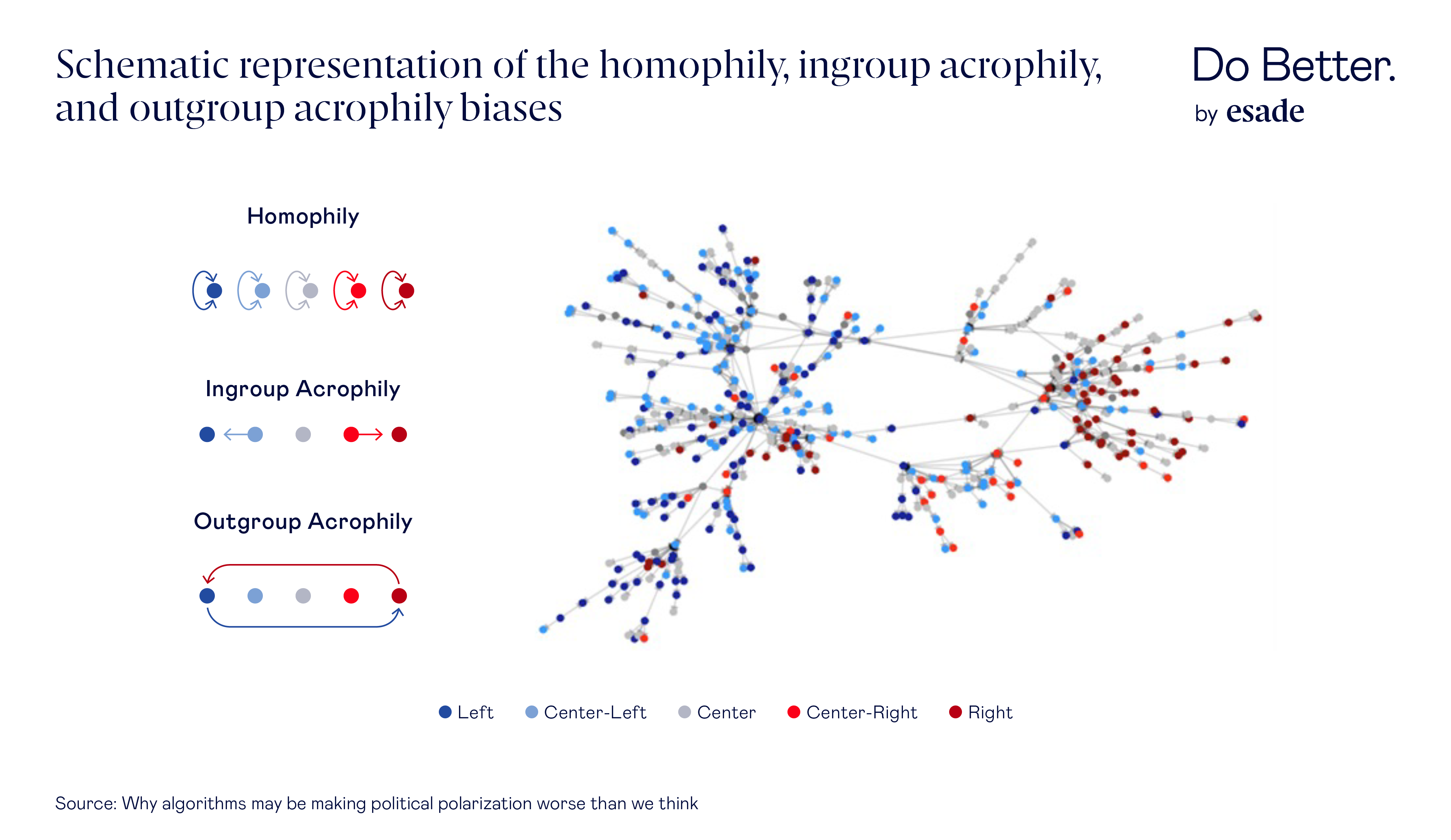

Marc Torrens, Associate Professor in the Department of Operations, Innovation & Data Sciences at Esade, and Carlos Carrasco-Farré, Professor of Data Science at TBS Education, conducted a study of YouTube’s political recommendation network covering more than 300,000 videos and roughly 1.7 million recommendation links. Their question was simple: when someone watches political content, what kind of political content does the platform lead them to next? The answer was troubling: recommendation patterns can favor ideological extremity in two different ways. First, by steering users toward more extreme content within their own political camp. Second, by disproportionately exposing them to extreme content from the opposing side.

The results have been published in the Journal of Management Information Systems.

The algorithm does not just mirror us; it structures what conflict looks like

The usual defense of recommender systems is that they merely reflect user preferences. People click, platforms learn, and the machine gives them more of what they seem to want. But Torrens and Carrasco-Farré's findings suggest that something different is also happening.

The researchers describe this pattern as acrophily. It begins as a social tendency: people often orient toward stronger, more emotionally resonant expressions of group identity. Yet on digital platforms, that tendency does not remain purely human. It becomes organized, repeated, and scaled by algorithms. In that sense, recommender systems do not simply mirror polarization. They can give it shape. They can turn a human psychological tendency into a recurring feature of the information environment.

This is especially important because a healthy democratic environment does not require everyone to agree. But it does require that disagreement remain intelligible. When platforms repeatedly show citizens the most emotionally explosive version of their political opponents, they are not broadening understanding. They are training attention toward confrontation and polarization.

Two roads to polarization, not one

The first road is familiar enough. A user watches content close to their own worldview, and the system nudges them toward a sharper, harder-edged version of that same worldview. In the study, this appeared clearly in the pathway from Center-Right content to more extreme Right content. This is ingroup acrophily: not simply staying among like-minded voices, but being pulled toward the most intense ones.

The second road is less obvious, and arguably more dangerous. A user is not only shown material from their own side; they are also fed extreme content from the other side. Not moderate disagreement. Not constructive opposition. The most emotionally charged and ideologically distant version of the outgroup. The researchers found this pattern in both Left-to-Right and Right-to-Left recommendation flows. This is outgroup acrophily.

That may sound abstract, but the everyday implication is easy to grasp. Suppose someone starts with mainstream or moderately partisan content. Instead of being guided toward a wider range of serious viewpoints, the system may pull them toward the most intense voices nearby and the most inflammatory voices across the political spectrum. The result is a distorted political field: your allies appear more radicalized, your opponents more outrageous, and the middle harder to see. This is not just more information. It is a different social reality.

But why does this matter? Because exposure to difference is not always the same thing as exposure to understanding. A healthy information environment might introduce citizens to moderate alternatives, unexpected common ground, or serious disagreement that invites reflection. But when a platform repeatedly shows people the most extreme caricature of their opponents, it can deepen hostility rather than broaden perspective. The result is not deliberation. It is confrontation optimized for attention.

Emotion as part of the mechanism

One of the most striking findings from the study is that these recommendation patterns are bound up with emotion. The content associated with acrophilic bias was not merely ideological. It was often emotionally loaded.

For ingroup acrophily, emotions such as anger and uncertainty appeared especially relevant, often in relation to institutions and moral issues. For outgroup acrophily, the emotional mix shifted toward disgust and sadness, particularly regarding culture, values, identity, and other socially charged themes. In practical terms, this means that the algorithm is not only sorting content by topic or ideology. It may also be sorting by emotional intensity.

That helps explain why these recommendation patterns can feel so powerful. High-arousal political content is memorable. It invites reaction. It sharpens the boundary between “us” and “them.” And in an attention economy, that makes it valuable, not necessarily because it informs people better, but because it keeps them engaged.

Emotion is not a side effect of digital politics; it is one of its engines. High-arousal negative content attracts attention, drives engagement, and sustains platform activity. But what is good for engagement is not always good for public life. A system optimized to maximize reaction may end up privileging outrage over nuance and hostility over understanding.

Why ordinary users should care

It is tempting to think this is mainly a technical issue for platform designers or regulators. It is not. It affects anyone who gets political information from digital platforms, which today means most of us.

When recommendation systems systematically amplify more extreme ingroup and outgroup narratives, they do more than shape content consumption. They shape social identity cues. They influence how people perceive what “their side” stands for, what the “other side” is like, and what kind of political behavior seems normal. Over time, that can harden group boundaries, increase hostility, and make compromise or common ground feel naïve or even dangerous.

This is why the issue reaches beyond YouTube. Recommender systems are now central infrastructures of public attention. They mediate news, commentary, culture, and everyday political sense-making. If their internal logic rewards the most emotionally polarizing content, then polarization is not simply emerging from society. It is being patterned by the architecture of exposure itself.

That has consequences for misinformation, trust, public debate, and social cohesion. If the digital environment consistently rewards the loudest, most antagonistic, and most emotionally provocative content, then democratic discourse becomes harder to sustain. Citizens do not only consume politics through these systems. Increasingly, they learn what politics is through them. That alone should matter to anyone concerned with how societies form opinions in the digital age.

What should change

If platforms help structure ideological conflict, then they also bear some responsibility for reducing its most damaging forms. That does not mean eliminating political disagreement or forcing artificial balance into every feed. It means recognizing that engagement should not be the only value built into recommendation systems.

The first step is to stop treating recommendation systems as neutral pipes. They are not passive mirrors of public preferences. They are sociotechnical actors that help define what becomes visible, salient, and emotionally resonant. That means they should be designed and governed with public consequences in mind.

The study's findings point toward several practical responses. Platforms could adopt diversity-aware recommendation models that reduce the overpromotion of high-arousal, conflict-laden political content. They could give users clearer explanations of why particular content is being recommended and offer tools to understand the ideological composition of their feeds. And regulators or independent auditors could evaluate whether recommendation systems are disproportionately amplifying extreme or misleading political material.

None of this would eliminate disagreement. Nor should it. Democratic societies need disagreement. But there is a difference between disagreement and distortion. There is a difference between exposure to political differences and repeated exposure to the most inflammatory version of political differences. A better digital environment would not shield people from conflict. It would stop engineering conflict as the default mode of attention.

The deeper lesson is simple. The danger is not only that algorithms give us more of what we already believe. It is that they may teach us to see politics through escalation: a more extreme version of our own side, and a more monstrous version of everyone else. Once that happens, polarization is no longer just a design problem. It becomes a social problem too.

Associate Professor, Department of Operations, Innovation & Data Sciences at Esade